Agentic AI Inference Sizing

Understanding Pricing for AI Agents

1. Introduction

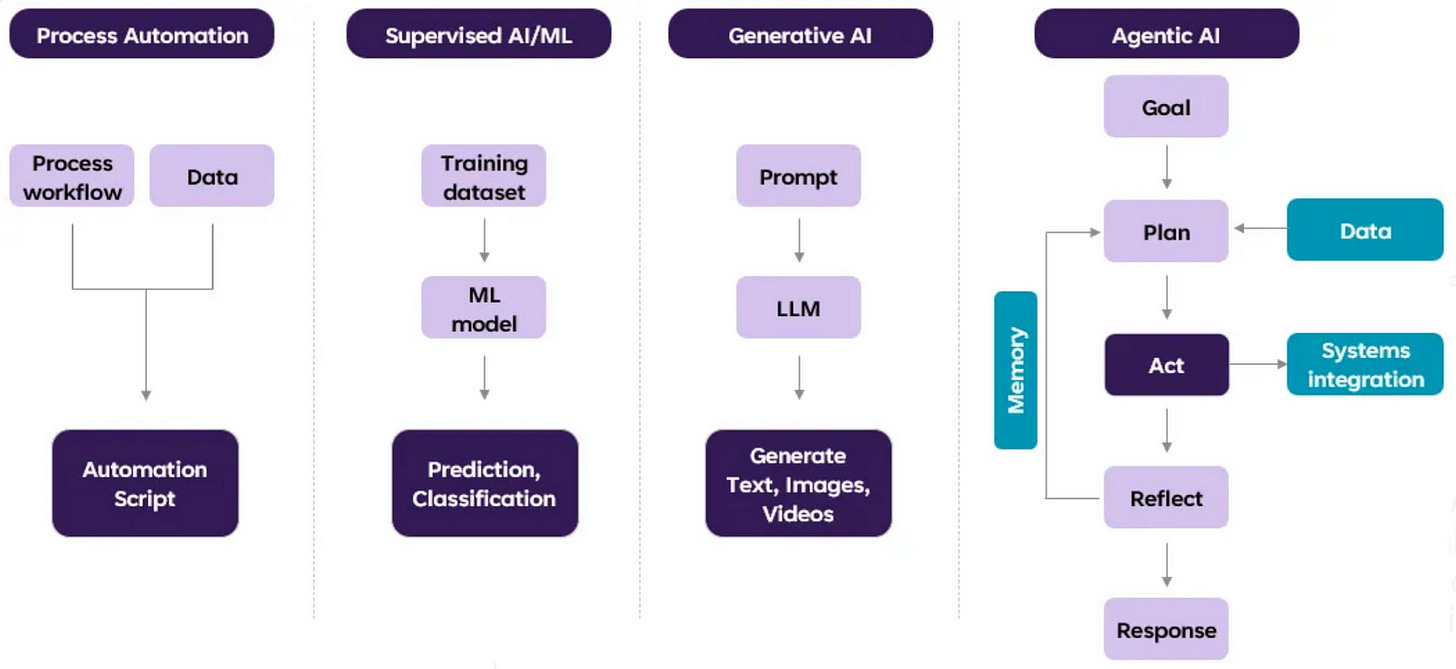

The discussion around ChatGPT (and generative AI in general) has now evolved into agentic AI. While ChatGPT is primarily a chatbot that generates text responses, AI agents can execute complex tasks autonomously, e.g., make a sale, plan a trip, book a flight, hire a contractor for a household job, or order a pizza. The figure above illustrates the evolution of agentic AI systems.

Bill Gates recently envisioned a future in which an AI agent can process and respond to natural language and accomplish a wide range of tasks. Gates used planning a trip as an example.

Ordinarily, this involves booking your hotel, flights, restaurants, etc., on your own. An AI agent, however, would be able to use its knowledge of your preferences to book and pay for all of these on your behalf.

While the benefits of AI agents are evident, they also come with a steep price tag :) In this article, we take a deep dive into how to price and size agentic AI systems that orchestrate multiple LLM agents.

We first consider the dimensions that impact LLM inference sizing, e.g.,

input and output context windows;

model size;

first-token latency and last-token latency;

throughput.
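To make these dimensions concrete, here is a minimal Python sketch of a per-request sizing model. It assumes a deliberately simple linear latency model: first-token latency is the time to prefill the input tokens, and last-token latency adds a fixed decode time per output token. It also assumes token-based pricing with separate input and output rates. The `LLMProfile` class, its field names, and all numbers are hypothetical placeholders, not measurements of any particular model or provider.

```python
from dataclasses import dataclass


@dataclass
class LLMProfile:
    """Hypothetical deployment profile; all numbers are illustrative assumptions."""
    params_billion: float        # model size, in billions of parameters
    bytes_per_param: float       # e.g. 2.0 for FP16/BF16 weights
    prefill_tokens_per_s: float  # how fast input (prompt) tokens are processed
    decode_s_per_token: float    # time to generate one output token
    price_in_per_1k: float       # price per 1,000 input tokens
    price_out_per_1k: float      # price per 1,000 output tokens


def weight_memory_gb(m: LLMProfile) -> float:
    """Memory for the weights alone (ignores KV cache and activations)."""
    return m.params_billion * m.bytes_per_param  # 1e9 params x bytes / 1e9 bytes per GB


def first_token_latency_s(m: LLMProfile, input_tokens: int) -> float:
    """Simplified: the first token is emitted once the whole prompt is prefilled."""
    return input_tokens / m.prefill_tokens_per_s


def last_token_latency_s(m: LLMProfile, input_tokens: int, output_tokens: int) -> float:
    """First-token latency plus one decode step per output token."""
    return first_token_latency_s(m, input_tokens) + output_tokens * m.decode_s_per_token


def output_throughput_tps(m: LLMProfile, input_tokens: int, output_tokens: int) -> float:
    """Output tokens per second seen by a single request (no batching modeled)."""
    return output_tokens / last_token_latency_s(m, input_tokens, output_tokens)


def request_cost(m: LLMProfile, input_tokens: int, output_tokens: int) -> float:
    """Token-based price of one request; input and output tokens are billed separately."""
    return input_tokens / 1000 * m.price_in_per_1k + output_tokens / 1000 * m.price_out_per_1k


if __name__ == "__main__":
    model = LLMProfile(params_billion=7, bytes_per_param=2.0,
                       prefill_tokens_per_s=2000, decode_s_per_token=0.02,
                       price_in_per_1k=0.001, price_out_per_1k=0.002)
    print(f"{weight_memory_gb(model):.1f}")                   # 14.0
    print(f"{first_token_latency_s(model, 1000):.2f}")        # 0.50
    print(f"{last_token_latency_s(model, 1000, 200):.2f}")    # 4.50
    print(f"{output_throughput_tps(model, 1000, 200):.2f}")   # 44.44
    print(f"{request_cost(model, 1000, 200):.4f}")            # 0.0014
```

Even in this toy model, the output context window dominates last-token latency (200 output tokens cost 4 s versus 0.5 s for 1,000 input tokens), which is why input and output windows are treated as separate sizing dimensions. Real prefill and decode rates depend on hardware, batching, and model size, so they would have to be measured for the actual deployment.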

We then extrapolate these dimensions to agentic AI:

mapping first-token and last-token latency to the latency of executing the first agent and of the full orchestration, respectively;

treating the output of the preceding agent, together with the overall execution state and contextual understanding, as part of the input context window of the following agent;

accommodating the inherent non-determinism of agentic execution.
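The three points above can be illustrated with a small Python sketch of a sequential chain of agents, using a simplified per-call latency model (prefill time plus a fixed decode time per output token). It assumes, hypothetically, that each agent's input window is its own prompt plus the preceding agent's output plus a shared execution state that grows by a fixed number of tokens per completed step. Non-determinism is modeled by randomly varying output lengths and occasionally retrying a step. `AgentStep`, `size_orchestration`, `simulate`, the retry probability, and all token counts and timings are illustrative assumptions, not a prescribed method.

```python
from __future__ import annotations

import random
import statistics
from dataclasses import dataclass

# Illustrative per-token timings (assumed, not measured).
PREFILL_TOKENS_PER_S = 2000.0
DECODE_S_PER_TOKEN = 0.02


@dataclass
class AgentStep:
    """One agent invocation in a hypothetical sequential orchestration."""
    name: str
    prompt_tokens: int   # the agent's own instructions / task description
    output_tokens: int   # tokens this agent generates


def call_latency_s(input_tokens: int, output_tokens: int) -> float:
    """Simplified per-call latency: prefill the input, then decode each output token."""
    return input_tokens / PREFILL_TOKENS_PER_S + output_tokens * DECODE_S_PER_TOKEN


def size_orchestration(steps: list[AgentStep], initial_state_tokens: int = 0,
                       state_growth_per_step: int = 100):
    """Return (first-agent latency, full-orchestration latency, per-agent input sizes).

    Input window of each agent = own prompt + preceding agent's output
    + execution state, which is assumed to grow by a fixed amount per step.
    """
    latencies, inputs = [], []
    prev_output, state = 0, initial_state_tokens
    for step in steps:
        input_tokens = step.prompt_tokens + prev_output + state
        inputs.append(input_tokens)
        latencies.append(call_latency_s(input_tokens, step.output_tokens))
        prev_output = step.output_tokens
        state += state_growth_per_step
    return latencies[0], sum(latencies), inputs


def simulate(steps: list[AgentStep], runs: int = 1000, retry_prob: float = 0.2,
             seed: int = 0) -> tuple[float, float]:
    """Monte Carlo estimate of (p50, p95) full-orchestration latency."""
    rng = random.Random(seed)
    totals = []
    for _ in range(runs):
        sampled = []
        for s in steps:
            # Output length varies between 50% and 150% of the nominal value.
            out = max(1, round(s.output_tokens * rng.uniform(0.5, 1.5)))
            sampled.append(AgentStep(s.name, s.prompt_tokens, out))
            if rng.random() < retry_prob:  # assume a failed step is re-run once
                sampled.append(AgentStep(s.name, s.prompt_tokens, out))
        totals.append(size_orchestration(sampled)[1])
    p95 = statistics.quantiles(totals, n=20)[18]  # 19th of 19 cut points = 95th percentile
    return statistics.median(totals), p95


if __name__ == "__main__":
    trip = [AgentStep("plan", 500, 200), AgentStep("search", 300, 400),
            AgentStep("book", 400, 100)]
    first, total, inputs = size_orchestration(trip)
    print(f"first agent: {first:.2f} s")      # first agent: 4.25 s
    print(f"full run:    {total:.2f} s")      # full run:    15.05 s
    print("input windows:", inputs)           # input windows: [500, 600, 1000]
    p50, p95 = simulate(trip)
    print(f"p50 {p50:.2f} s, p95 {p95:.2f} s")
```

Reporting a percentile such as p95 alongside the median reflects the point that two runs of the same agentic task can differ both in the number of LLM calls and in the tokens generated per call, so a single deterministic estimate can understate the required capacity.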